Database Schema Migration Best Practices | Challenges and Principles Focused

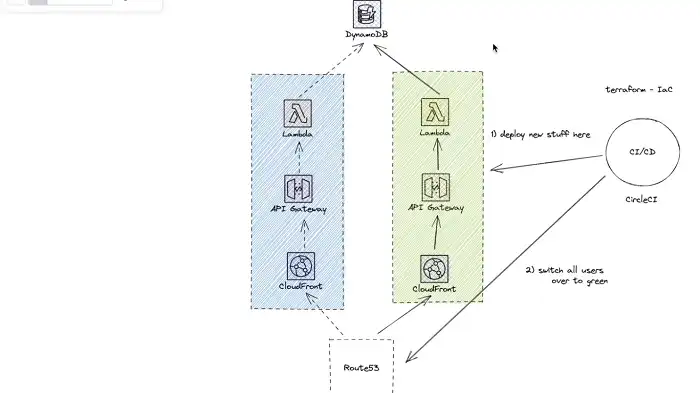

Smooth database schema changes depend on choosing the right deployment strategy. Planned maintenance allows coordinated changes but takes the database offline and can disrupt users. Blue/Green deployments minimize downtime but are harder to apply when the schema itself is evolving.

Feature flags decouple deploying new functionality from activating it, supporting a seamless transition. Canary releases test changes on a subset of clients to detect issues early. The expand and contract pattern deploys the new schema gradually, providing safety layers but requiring meticulous planning.

Whether you’re just starting out or a few steps into the migration process, this handbook should come in handy.

Challenges with Database-Related Deployment Strategies

Migrating your database schema often poses challenges that require careful consideration. A few of them are as follows:

Successfully Migrating Existing Data

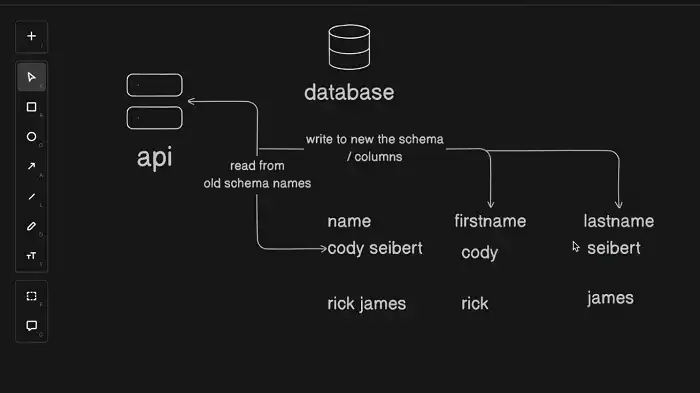

Modifying the database structure often involves adapting existing data to conform to the new schema. For complex operations like column splitting or combining, custom data transformations are necessary. Handling large datasets during migration can introduce time constraints and performance considerations.
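As a rough illustration of a column split on a large table, the sketch below backfills two new columns from an existing one in small batches, so that no single transaction touches every row. The `users` table, its column names, and the `split_full_name` helper are assumptions made for this example, and SQLite stands in for whatever database you actually run.

```python
import sqlite3

def split_full_name(conn: sqlite3.Connection, batch_size: int = 1000) -> int:
    """Backfill first_name/last_name from full_name, one batch at a time."""
    total = 0
    while True:
        # Rows whose first_name is still NULL have not been migrated yet.
        rows = conn.execute(
            "SELECT id, full_name FROM users WHERE first_name IS NULL LIMIT ?",
            (batch_size,),
        ).fetchall()
        if not rows:
            return total
        updates = []
        for row_id, full_name in rows:
            # Everything before the first space is the first name.
            first, _, last = full_name.strip().partition(" ")
            updates.append((first, last.strip(), row_id))
        with conn:  # each batch is committed in its own transaction
            conn.executemany(
                "UPDATE users SET first_name = ?, last_name = ? WHERE id = ?",
                updates,
            )
        total += len(rows)

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, full_name TEXT NOT NULL, "
    "first_name TEXT, last_name TEXT)"
)
conn.executemany(
    "INSERT INTO users (full_name) VALUES (?)",
    [("Ada Lovelace",), ("Grace Brewster Hopper",), ("Plato",)],
)
conn.commit()

migrated = split_full_name(conn, batch_size=2)
```

Small batches keep each transaction short, which tends to reduce lock contention on a live system. The right batch size depends on your data and workload.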

Making Changes Reversible

Making changes reversible is hard, especially once live code has started writing to the new data structures, because reverting the schema can discard that data. For this reason, it is often safer to prioritize “rolling forward” (shipping a corrective change) over “rolling back”, so that data integrity is preserved even when application behavior is reverted.

Testing Schema Changes

Testing schema changes requires finding ways to capture edge cases and validate new data formats. Real-world data often pushes against constraints in unexpected ways, so testing needs to be comprehensive and should cover stored procedures and triggers as well as tables.

Performance and Availability Impact

Schema changes can significantly impact performance and availability. Test environments rarely match production data volumes and traffic, so the impact measured there may not reflect what happens in production, making it difficult to predict potential issues accurately.

What Are the Principles of Database Schema Migration?

Understanding the principles of database migration is essential for a successful schema transition. These principles include careful planning, comprehensive testing, prioritizing gradual changes over abrupt modifications, and incorporating the following essential practices:

Backup Data

Before initiating any schema migration, it’s crucial to create a backup of the existing database. This step acts as a safety net in case anything goes wrong during the migration process. Regular backups also provide a way to restore the system to a previous state if necessary.
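The sketch below shows the idea with Python's built-in `sqlite3` module, whose `Connection.backup` method copies a database into another connection. The `orders` table and the `snapshot` and `row_count` helpers are hypothetical. For a server database you would use its native backup tooling instead (for example `pg_dump` for PostgreSQL).

```python
import sqlite3

def snapshot(source: sqlite3.Connection) -> sqlite3.Connection:
    """Copy the whole database into a fresh in-memory connection."""
    copy = sqlite3.connect(":memory:")
    source.backup(copy)
    return copy

def row_count(conn: sqlite3.Connection, table: str) -> int:
    # The table name is interpolated, so only pass trusted names here.
    return conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]

live = sqlite3.connect(":memory:")
live.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)")
live.executemany("INSERT INTO orders (total) VALUES (?)", [(9.5,), (20.0,)])
live.commit()

# Take the backup first, then change the schema of the live database.
backup = snapshot(live)
live.execute("ALTER TABLE orders ADD COLUMN currency TEXT")

backup_columns = [row[1] for row in backup.execute("PRAGMA table_info(orders)")]
```

Verifying the backup, here by comparing row counts and columns, matters as much as taking it. A backup that cannot be restored is not a safety net.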

Version Control

Implement version control for database schemas using tools like Git. Storing schema changes alongside application code allows for better coordination between developers and ensures that everyone is working with the same version of the database schema. This practice helps track changes, roll back to previous versions if needed, and maintain a clear history of schema modifications.

Use Migration Scripts

Instead of manually altering the database schema, use migration scripts. These scripts contain a series of instructions that define how the database schema should change. By using scripts, you can automate the application of schema changes, making the process more repeatable and reducing the likelihood of human error.
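To make this concrete, here is a minimal sketch of a migration runner. It is not how Liquibase, Flyway, or any particular tool works internally; the `MIGRATIONS` list, the `schema_version` table, and the function names are all assumptions for illustration. Each migration has a number, and the runner applies only those newer than the version recorded in the database.

```python
import sqlite3

# Ordered, numbered schema changes (hypothetical example content).
MIGRATIONS = [
    (1, "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"),
    (2, "ALTER TABLE customers ADD COLUMN email TEXT"),
    (3, "CREATE INDEX idx_customers_email ON customers (email)"),
]

def current_version(conn: sqlite3.Connection) -> int:
    """Return the highest applied migration number, or 0 for a fresh database."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_version (version INTEGER NOT NULL)"
    )
    row = conn.execute("SELECT MAX(version) FROM schema_version").fetchone()
    return row[0] or 0

def migrate(conn: sqlite3.Connection) -> list:
    """Apply every migration that has not been applied yet, in order."""
    applied = []
    version = current_version(conn)
    for number, statement in MIGRATIONS:
        if number <= version:
            continue  # already applied on an earlier run
        conn.execute(statement)
        with conn:  # commit the bookkeeping row once the statement succeeded
            conn.execute(
                "INSERT INTO schema_version (version) VALUES (?)", (number,)
            )
        applied.append(number)
    return applied
```

Running `migrate` twice on the same database applies the changes once and then does nothing, which is what makes scripted migrations repeatable across environments.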

Test Thoroughly

Create a set of test cases that cover various aspects of the application affected by the schema changes. This includes testing data migration, ensuring that existing functionalities remain intact, and verifying the performance of the database after the migration. Automated testing tools can be employed to streamline this process.
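One possible shape for such a test is sketched below: run the migration against representative data, including an awkward edge case, and then check invariants such as row counts and missing values. The `products` table, the `migrate_prices` migration, and the `check_migration` helper are made up for this example.

```python
import sqlite3

def migrate_prices(conn: sqlite3.Connection) -> None:
    """Hypothetical migration: store prices as integer cents instead of REAL."""
    conn.execute("ALTER TABLE products ADD COLUMN price_cents INTEGER")
    conn.execute(
        "UPDATE products SET price_cents = CAST(ROUND(price * 100) AS INTEGER)"
    )
    conn.commit()

def check_migration(conn: sqlite3.Connection, expected_rows: int) -> list:
    """Return a list of problems found after the migration (empty if none)."""
    problems = []
    rows = conn.execute("SELECT COUNT(*) FROM products").fetchone()[0]
    if rows != expected_rows:
        problems.append(f"row count changed: {expected_rows} -> {rows}")
    missing = conn.execute(
        "SELECT COUNT(*) FROM products WHERE price_cents IS NULL"
    ).fetchone()[0]
    if missing:
        problems.append(f"rows without price_cents: {missing}")
    return problems

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, price REAL)")
# The NULL price is the kind of edge case real data tends to contain.
conn.executemany(
    "INSERT INTO products (price) VALUES (?)", [(19.99,), (0.1,), (None,)]
)
conn.commit()

migrate_prices(conn)
problems = check_migration(conn, expected_rows=3)
```

Here the check flags the row with a missing price, a case the migration silently carried over. Finding that in a test run is far cheaper than finding it in production.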

Implement Rollback Procedures

No matter how well you plan, there’s always a chance that something could go wrong during a migration. Implement robust rollback procedures to revert to the previous state quickly and effectively. This might involve having rollback scripts or other mechanisms in place to undo the changes made during the migration.
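A common way to prepare for this is to write each migration as a pair: an "up" step that applies the change and a "down" step that undoes it. The sketch below assumes a hypothetical `audit_log` table and helper names of our own choosing.

```python
import sqlite3

# Each migration carries the statement that applies it and the one that undoes it.
MIGRATION = {
    "up": "CREATE TABLE audit_log (id INTEGER PRIMARY KEY, message TEXT)",
    "down": "DROP TABLE audit_log",
}

def table_exists(conn: sqlite3.Connection, name: str) -> bool:
    row = conn.execute(
        "SELECT 1 FROM sqlite_master WHERE type = 'table' AND name = ?", (name,)
    ).fetchone()
    return row is not None

def apply(conn: sqlite3.Connection, migration: dict) -> None:
    conn.execute(migration["up"])
    conn.commit()

def revert(conn: sqlite3.Connection, migration: dict) -> None:
    # Caution: dropping the table also drops any rows written since "up" ran.
    conn.execute(migration["down"])
    conn.commit()

conn = sqlite3.connect(":memory:")
apply(conn, MIGRATION)
exists_after_up = table_exists(conn, "audit_log")
revert(conn, MIGRATION)
exists_after_down = table_exists(conn, "audit_log")
```

The comment in `revert` is the important caveat: a "down" step restores the schema, not the data, so rollback scripts deserve the same testing as the migrations themselves.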

Schedule Downtime Wisely

If your application has predictable usage patterns, schedule schema migrations during periods of low activity to minimize the impact on users. Communicate the downtime schedule to users and stakeholders in advance. For applications that require high availability, consider techniques like blue-green deployments or canary releases to minimize downtime.

Monitor Performance

Keep a close eye on the performance of the database during and after migration. Monitoring tools can help identify performance bottlenecks, allowing you to address issues promptly. Performance testing should be an integral part of your migration strategy to ensure that the database can handle the load effectively.
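As a small example of what such a check might look like, the sketch below inspects a query plan before and after adding an index and times the query. It uses SQLite's `EXPLAIN QUERY PLAN`; the `events` table, the index name, and the helper functions are assumptions for illustration, and a production setup would rely on the monitoring tools of your own database.

```python
import sqlite3
import time

def query_plan(conn: sqlite3.Connection, sql: str, params=()) -> str:
    """Return SQLite's plan description for a query as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " ".join(row[-1] for row in rows)  # last column holds the detail text

def timed(conn: sqlite3.Connection, sql: str, params=()) -> float:
    """Run a query and return the elapsed wall-clock time in seconds."""
    start = time.perf_counter()
    conn.execute(sql, params).fetchall()
    return time.perf_counter() - start

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE events (id INTEGER PRIMARY KEY, user_id INTEGER, payload TEXT)"
)
conn.executemany(
    "INSERT INTO events (user_id, payload) VALUES (?, ?)",
    [(i % 500, "x") for i in range(20000)],
)
conn.commit()

QUERY = "SELECT * FROM events WHERE user_id = ?"
plan_before = query_plan(conn, QUERY, (42,))  # full table scan
conn.execute("CREATE INDEX idx_events_user ON events (user_id)")
plan_after = query_plan(conn, QUERY, (42,))   # should now use the index
elapsed = timed(conn, QUERY, (42,))
```

Comparing plans before and after a schema change is a cheap way to catch a query that has silently fallen back to a full scan.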

Collaborate and Communicate

Effective communication and collaboration among team members are crucial during schema migrations. Ensure that all stakeholders, including developers, database administrators, and system operators, are aware of the migration plan and its potential impact.

Document Changes

Maintain detailed documentation of all schema changes. This documentation should include the purpose of each change, the corresponding migration script, and any dependencies or considerations for other parts of the system. This documentation becomes invaluable for future reference and audits.

Regularly Review and Refine Processes

After completing a schema migration, conduct a post-migration review. Identify what worked well and which areas need improvement. Use this feedback to refine and optimize your schema migration process continuously. This iterative approach helps the team learn and adapt, leading to more efficient and reliable migrations.

What Are the Best Practices for Database Schema Changes?

Here, we have listed the best practices that, when followed, help make the transition during schema changes as seamless as possible.

Planned Maintenance: Upgrading Schemas with Downtime

One strategy involves taking the database offline during migration, treating downtime as a cost. While suitable for certain cases, it may not align with organizations prioritizing high availability.

Advantages:

- Coordinated changes with client code.

- Inspection and testing without live performance impact.

- Simplicity and minimal infrastructure requirements.

Disadvantages:

- Significant downtime impact.

- Potential for unforeseen problems and cascading disruptions.

Blue/Green Deployments

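Blue/Green deployments, commonly used for code changes, run two identical environments: one (blue) serves production traffic while the other (green) receives the new release, and traffic is switched over once the new release is verified. While effective for minimizing downtime, this approach is harder to apply to schema changes, because both environments usually depend on the same data, so the schema must remain compatible with both versions of the application during the switch.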

Feature Flags

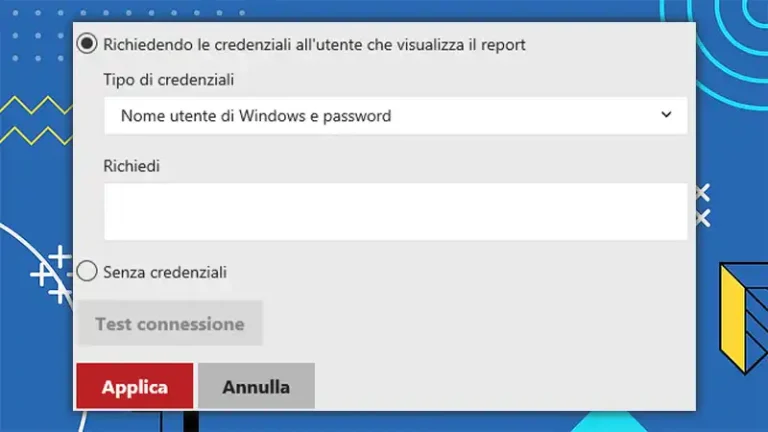

Feature flags allow developers to modify application behavior at runtime based on externally set values. In the context of schema changes, feature flags facilitate the decoupling of deploying new functionality from its activation, supporting a smooth transition.

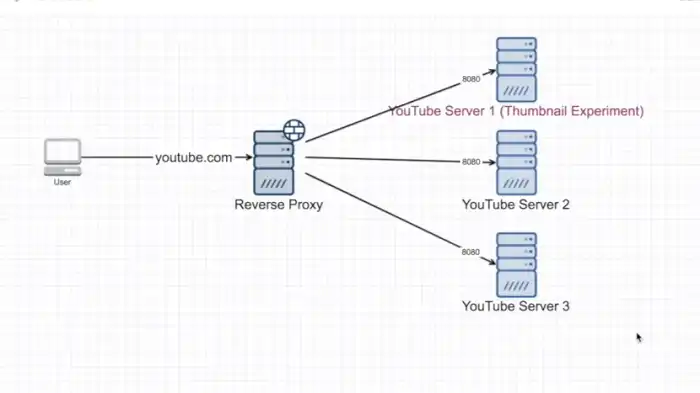
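A minimal sketch of a flag-guarded read path is shown below. The `FLAGS` dictionary stands in for a real flag service, and the flag name, table, and `display_name` function are hypothetical. Both the old column and the new columns exist in the schema, and the flag decides at runtime which one the application reads.

```python
import sqlite3

# Hypothetical in-memory flag store; real systems usually load flags externally.
FLAGS = {"read_split_name": False}

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, full_name TEXT, "
    "first_name TEXT, last_name TEXT)"
)
conn.execute(
    "INSERT INTO users (full_name, first_name, last_name) "
    "VALUES ('Ada Lovelace', 'Ada', 'Lovelace')"
)
conn.commit()

def display_name(conn: sqlite3.Connection, user_id: int) -> str:
    if FLAGS["read_split_name"]:
        # New code path: read from the new columns.
        sql = "SELECT first_name || ' ' || last_name FROM users WHERE id = ?"
    else:
        # Old code path: read from the original column.
        sql = "SELECT full_name FROM users WHERE id = ?"
    return conn.execute(sql, (user_id,)).fetchone()[0]

before = display_name(conn, 1)
FLAGS["read_split_name"] = True   # activate the new path without a deployment
after = display_name(conn, 1)
FLAGS["read_split_name"] = False  # and switch back just as easily
```

Because the new path ships disabled, the schema change and the code that uses it can be deployed ahead of time and activated, or deactivated, independently.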

Canary Releases

Canary releases involve introducing changes on a small subset of clients before deploying to the entire infrastructure. This strategy minimizes risks by allowing early detection of issues and gradual rollout to additional systems.
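One simple way to pick the subset, sketched here under our own assumptions, is to hash a stable client identifier into a bucket from 0 to 99 and admit the buckets below the rollout percentage. The `in_canary` function and the client ID format are illustrative only.

```python
import hashlib

def in_canary(client_id: str, percent: int) -> bool:
    """Deterministically decide whether a client is part of the canary group."""
    digest = hashlib.sha256(client_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < percent

clients = [f"client-{n}" for n in range(1000)]
canary_5 = {c for c in clients if in_canary(c, 5)}
canary_50 = {c for c in clients if in_canary(c, 50)}
```

Hashing keeps the assignment stable: a client stays in the same bucket between requests, and raising the percentage only adds clients to the canary group without removing any.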

Expand and Contract Pattern

The expand and contract pattern stands out as a comprehensive method for introducing schema changes. This approach involves deploying the new schema alongside the original, migrating data gradually, and transitioning production traffic in planned stages.

Advantages:

- Gradual transition over an extended period.

- Decoupling reading and writing from the new data schema.

- Multiple layers of safety for potential issues.

Disadvantages:

- Requires careful planning and coordination.
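The stages of the pattern can be sketched as follows. This is a simplified illustration, assuming a hypothetical `accounts` table whose `email` column is being replaced by a normalized `email_normalized` column. The function names are our own, and the final contract step is shown only as a comment.

```python
import sqlite3

def normalize(email: str) -> str:
    return email.strip().lower()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO accounts (email) VALUES (?)",
    [("Ada@Example.com",), ("BOB@example.com",)],
)

# 1. Expand: add the new column next to the old one.
conn.execute("ALTER TABLE accounts ADD COLUMN email_normalized TEXT")

def save_email(conn, account_id, email):
    # 2. Dual-write: new writes populate both the old and the new column.
    conn.execute(
        "UPDATE accounts SET email = ?, email_normalized = ? WHERE id = ?",
        (email, normalize(email), account_id),
    )

def backfill(conn):
    # 3. Backfill: migrate rows written before dual-writing started.
    rows = conn.execute(
        "SELECT id, email FROM accounts "
        "WHERE email_normalized IS NULL AND email IS NOT NULL"
    ).fetchall()
    conn.executemany(
        "UPDATE accounts SET email_normalized = ? WHERE id = ?",
        [(normalize(email), row_id) for row_id, email in rows],
    )
    return len(rows)

def load_email(conn, account_id):
    # 4. Switch reads: readers now use only the new column.
    return conn.execute(
        "SELECT email_normalized FROM accounts WHERE id = ?", (account_id,)
    ).fetchone()[0]

save_email(conn, 1, "Ada@New.example")
backfilled = backfill(conn)
conn.commit()

# 5. Contract: once nothing reads or writes the old column, drop it, e.g.
#    ALTER TABLE accounts DROP COLUMN email  (needs SQLite 3.35+, not run here)
```

Each stage can be deployed and verified separately, and until the contract step the old column is still there to fall back on.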

What Is the Difference Between Data Migration and ETL?

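Data migration and ETL (extract, transform, load) look similar at first, since both move data from one system to another, but they differ in their applications. Data migration suits the transfer of entire databases, while ETL excels with limited or specific datasets that are extracted, transformed, and loaded into a target system.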

What Are the Steps in Migrating to the New Database?

Steps include backing up the existing database, designing the new schema, adapting existing data, testing thoroughly, and gradually transitioning production traffic, with every schema change kept under version control.

What Are the Migration Techniques for SQL Databases?

Techniques include using tools like Liquibase or Flyway, versioning changes, and implementing transactional scripts for data consistency, alongside essential practices like backups and comprehensive testing.
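As an illustration of a transactional script, the sketch below applies a list of statements inside one transaction and rolls everything back if any of them fails. The `run_in_transaction` helper and the `items` table are assumptions for this example. It relies on SQLite treating schema changes as transactional; not every database does, and some engines, MySQL among them, commit implicitly on most DDL statements.

```python
import sqlite3

def run_in_transaction(conn: sqlite3.Connection, statements: list) -> bool:
    """Apply all statements or none of them.

    Expects a connection opened with isolation_level=None, so that this
    function controls BEGIN, COMMIT, and ROLLBACK explicitly.
    """
    conn.execute("BEGIN")
    try:
        for statement in statements:
            conn.execute(statement)
    except sqlite3.Error:
        conn.execute("ROLLBACK")  # undo every statement applied so far
        return False
    conn.execute("COMMIT")
    return True

conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE items (id INTEGER PRIMARY KEY, sku TEXT)")

ok = run_in_transaction(
    conn,
    [
        "ALTER TABLE items ADD COLUMN price INTEGER",
        "ALTER TABLE missing_table ADD COLUMN x INTEGER",  # fails on purpose
    ],
)
columns = [row[1] for row in conn.execute("PRAGMA table_info(items)")]
```

Because the second statement fails, the first one is rolled back as well, and the table is left exactly as it was before the script ran.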