How I Use Terraform & Composer to Automate WordPress on AWS

How I set up WordPress to deploy automatically on AWS

Want to make your WordPress site bulletproof, with no more worrying about server outages? Want it faster & more reliable, and hosted on cheaper components too?

I was after all these gains & also wanted to kick the tires on some of Amazon’s latest devops offerings. So I plotted a way forward to completely automate the deployment of my blog, which runs on WordPress.

Here’s how!

The article is divided into two parts…

Deploy a WordPress Site on AWS – Decouple Assets (Part 1)

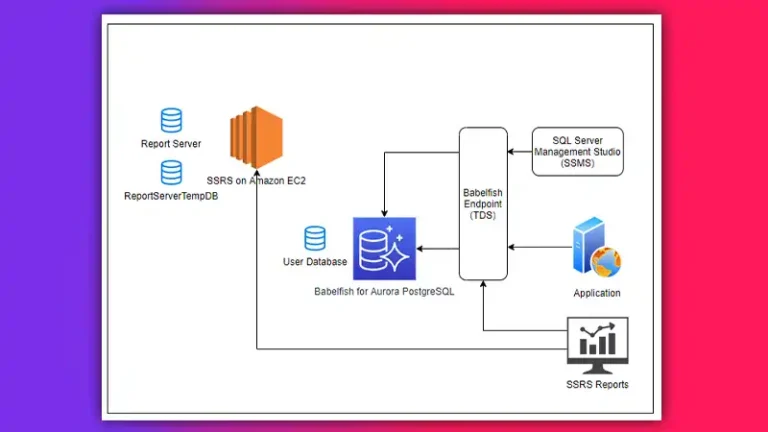

In this one I decouple the assets from the website. What do I mean by this? By moving the db to its own server, or to RDS for even simpler management, my server can be stopped & started or terminated at will without losing all my content. Cool.

You’ll also need to decouple your uploaded files. Those are all the files in the uploads directory. Amazon’s S3 offering is purpose-built for this use case. It also comes with easy CloudFront integration for object caching, and lifecycle management to give your files backups over time. Cool!

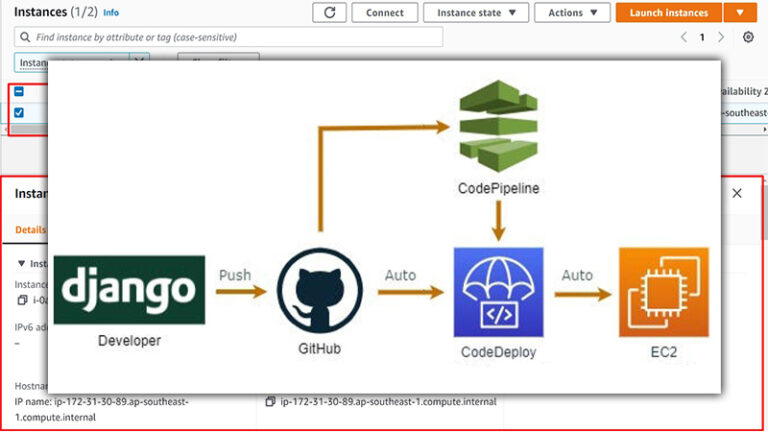

Deploy a WordPress Site on AWS – Automate (Part 2)

In the second part we move into all the automation pieces. We’ll use PHP’s Composer to manage dependencies. That’s fancy talk for fetching WordPress itself, and all of our plugins.

1. Isolate Your Config Files

Create a directory & put your config files in it.

    $ mkdir iheavy
    $ cd iheavy
    $ touch htaccess
    $ touch httpd.conf
    $ touch wp-config.php
    $ touch a_simple_pingdom_test.php
    $ touch composer.json
    $ zip -r iheavy-config.zip *
    $ aws s3 cp iheavy-config.zip s3://my-config-bucket/

In a future post we’re going to put all these files in version control. Amazon’s CodeCommit is feature compatible with GitHub, but integrated right into your account. Once you have your files there, you can use CodeDeploy to automatically place files on your server.

We chose to leave this step out to simplify the server role your new EC2 webserver instance needs. In our case it only needs S3 permissions!
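The user-data script later on expects every one of these files to be in the archive, so a quick check before uploading can catch a missing file early. Here’s a minimal sketch of that idea. It isn’t part of my original setup: the function name `check_config_files` is made up for illustration, and the file list simply mirrors the `touch` commands above.

```shell
#!/bin/sh
# Hypothetical helper: verify the config directory holds every file
# created in step 1 before it is zipped and uploaded.
# The names mirror the touch commands above; adjust to your own set.
REQUIRED_FILES="htaccess httpd.conf wp-config.php a_simple_pingdom_test.php composer.json"

check_config_files() {
    dir=$1
    missing=0
    for f in $REQUIRED_FILES; do
        if [ ! -f "$dir/$f" ]; then
            echo "missing: $f" >&2
            missing=1
        fi
    done
    # 0 when everything is present, 1 when at least one file is missing
    return "$missing"
}

# Usage (the upload only runs if the check passes):
#   check_config_files iheavy && (cd iheavy && zip -r iheavy-config.zip * \
#       && aws s3 cp iheavy-config.zip s3://my-config-bucket/)
```

Because the function only returns a status, it can sit in front of the `zip` and `aws s3 cp` commands without changing them.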


2. Build Your Terraform Script

Terraform is a lot like Vagrant: you describe what you want in a config file, and the tool builds it for you.

The terraform configuration formalizes what you are asking of Amazon’s API. What size instance? Which AMI? Which VPC should I launch in? Which role should my instance assume to get the S3 access it needs? And lastly, how do we make sure it gets the same Elastic IP each time it boots?

All the magic is inside the terraform config.

Here’s what I see:

    levanter:~ sean$ cat iheavy.tf

    resource "aws_iam_role" "web_iam_role" {
      name = "web_iam_role"
      assume_role_policy =

And here’s what it looks like when I ask terraform to build my infrastructure:

    levanter:~ sean$ terraform apply
    aws_iam_instance_profile.web_instance_profile: Refreshing state... (ID: web_instance_profile)
    aws_iam_role.web_iam_role: Refreshing state... (ID: web_iam_role)
    aws_s3_bucket.apps_bucket: Refreshing state... (ID: iheavy)
    aws_iam_role_policy.web_iam_role_policy: Refreshing state... (ID: web_iam_role:web_iam_role_policy)
    aws_instance.iheavy: Refreshing state... (ID: i-14e92e24)
    aws_eip.bar: Refreshing state... (ID: eipalloc-78732a47)
    aws_instance.iheavy: Creating...
      ami: "" => "ami-1a249873"
      availability_zone: "" => ""
      ebs_block_device.#: "" => ""
      ephemeral_block_device.#: "" => ""
      iam_instance_profile: "" => "web_instance_profile"
      instance_state: "" => ""
      instance_type: "" => "t1.micro"
      key_name: "" => "iheavy"
      network_interface_id: "" => ""
      placement_group: "" => ""
      private_dns: "" => ""
      private_ip: "" => ""
      public_dns: "" => ""
      public_ip: "" => ""
      root_block_device.#: "" => ""
      security_groups.#: "" => ""
      source_dest_check: "" => "true"
      subnet_id: "" => "subnet-1f866434"
      tenancy: "" => ""
      user_data: "" => "ca8a661fffe09e4392b6813fbac68e62e9fd28b4"
      vpc_security_group_ids.#: "" => "1"
      vpc_security_group_ids.2457389707: "" => "sg-46f0f223"
    aws_instance.iheavy: Still creating... (10s elapsed)
    aws_instance.iheavy: Still creating... (20s elapsed)
    aws_instance.iheavy: Creation complete
    aws_eip.bar: Modifying...
      instance: "" => "i-6af3345a"
    aws_eip_association.eip_assoc: Creating...
      allocation_id: "" => "eipalloc-78732a47"
      instance_id: "" => "i-6af3345a"
      network_interface_id: "" => ""
      private_ip_address: "" => ""
      public_ip: "" => ""
    aws_eip.bar: Modifications complete
    aws_eip_association.eip_assoc: Creation complete

    Apply complete! Resources: 2 added, 1 changed, 0 destroyed.

    The state of your infrastructure has been saved to the path
    below. This state is required to modify and destroy your
    infrastructure, so keep it safe. To inspect the complete state
    use the `terraform show` command.

    State path: terraform.tfstate
    levanter:~ sean$
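If you’d rather look before you leap, Terraform can save its proposed changes to a file with `terraform plan -out` and then apply exactly that file. The wrapper below is a rough sketch of that pattern, not something from my original setup. The names `plan_then_apply`, `TF_BIN` and `PLAN_FILE` are invented for illustration.

```shell
#!/bin/sh
# Sketch: save a plan, ask for confirmation, then apply exactly that plan.
# TF_BIN and PLAN_FILE are illustrative names, not from the original post.
TF_BIN=${TF_BIN:-terraform}
PLAN_FILE=${PLAN_FILE:-iheavy.tfplan}

plan_then_apply() {
    # Write the proposed changes to a plan file (terraform prints them too).
    "$TF_BIN" plan -out="$PLAN_FILE" || return 1

    # Ask before touching real infrastructure.
    printf 'Apply this plan? [y/N] '
    read -r answer
    case $answer in
        y|Y) "$TF_BIN" apply "$PLAN_FILE" ;;
        *)   echo "Skipped apply."; return 0 ;;
    esac
}

# Usage, from the directory holding iheavy.tf:
#   plan_then_apply
```

Anything other than `y` leaves the infrastructure untouched, which is handy when the plan shows a resource being destroyed that you did not expect.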

3. Use Composer to Automate WordPress Install

There is a PHP package manager called Composer. It manages dependencies, and we depend on a few things: first WordPress itself, and second the various plugins we have installed.

The file is a JSON file. Pretty vanilla. Have a look:

    {
      "name": "acme/brilliant-wordpress-site",
      "description": "My brilliant WordPress site",
      "repositories": [
        {
          "type": "composer",
          "url": "https://wpackagist.org"
        }
      ],
      "require": {
        "aws/aws-sdk-php": "*",
        "wpackagist-plugin/medium": "1.4.0",
        "wpackagist-plugin/google-sitemap-generator": "3.2.9",
        "wpackagist-plugin/amp": "0.3.1",
        "wpackagist-plugin/w3-total-cache": "0.9.3",
        "wpackagist-plugin/wordpress-importer": "0.6.1",
        "wpackagist-plugin/yet-another-related-posts-plugin": "4.0.7",
        "wpackagist-plugin/better-wp-security": "5.3.7",
        "wpackagist-plugin/disqus-comment-system": "2.74",
        "wpackagist-plugin/amazon-s3-and-cloudfront": "1.1",
        "wpackagist-plugin/amazon-web-services": "1.0",
        "wpackagist-plugin/feedburner-plugin": "1.48",
        "wpackagist-theme/hueman": "*",
        "php": ">=5.3",
        "johnpbloch/wordpress": "4.6.1"
      },
      "autoload": {
        "psr-0": {
          "Acme": "src/"
        }
      }
    }
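One thing worth noticing in this file: a couple of entries, `aws/aws-sdk-php` and the `hueman` theme, use `*` as the version constraint, so `composer update` may pull a different release each time the instance is rebuilt. As a rough sketch, a grep like the one below can list those entries. The function name `list_unpinned` is hypothetical, and the pattern assumes simple `"name": "*"` pairs like the ones above rather than parsing the JSON properly.

```shell
#!/bin/sh
# Hypothetical helper: print every "package": "*" pair in a composer.json.
# This is a plain text match, not a JSON parser, so it assumes the
# simple "name": "version" pairs used in the file above.
list_unpinned() {
    grep -o '"[^"]*"[[:space:]]*:[[:space:]]*"\*"' "$1"
}

# Usage:
#   list_unpinned composer.json
```

Pinning exact versions, as most of the plugins in the file already do, keeps rebuilds repeatable.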

4. Build Your User-Data Script

This captures all the commands you run once the instance starts. Update packages, install your own, move & configure files. You name it!

    #!/bin/sh

    yum update -y
    yum install emacs -y
    yum install mysql -y
    yum install php -y
    yum install git -y
    yum install aws-cli -y
    yum install gd -y
    yum install php-gd -y
    yum install ImageMagick -y
    yum install php-mysql -y

    yum install -y httpd24
    service httpd start
    chkconfig httpd on

    # configure mysql password file
    echo "[client]" >> /root/.my.cnf
    echo "host=my-rds.ccccjjjjuuuu.us-east-1.rds.amazonaws.com" >> /root/.my.cnf
    echo "user=root" >> /root/.my.cnf
    echo "password=abc123" >> /root/.my.cnf

    # install PHP composer
    export COMPOSER_HOME=/root
    echo "installing composer..."
    php -r "copy('https://getcomposer.org/installer', 'composer-setup.php');"
    php -r "if (hash_file('SHA384', 'composer-setup.php') === 'e115a8dc7871f15d853148a7fbac7da27d6c0030b848d9b3dc09e2a0388afed865e6a3d6b3c0fad45c48e2b5fc1196ae') { echo 'Installer verified'; } else { echo 'Installer corrupt'; unlink('composer-setup.php'); } echo PHP_EOL;"
    php composer-setup.php
    php -r "unlink('composer-setup.php');"
    mv composer.phar /usr/local/bin/composer

    # fetch config files from private S3 folder
    aws s3 cp s3://iheavy-config/iheavy_files.zip .

    # unzip files
    unzip iheavy_files.zip

    # use composer to get wordpress & plugins
    composer update

    # move wordpress software
    mv wordpress/* /var/www/html/

    # move plugins
    mv wp-content/plugins/* /var/www/html/wp-content/plugins/

    # move pingdom test
    mv a_simple_pingdom_test.php /var/www/html

    # move htaccess
    mv htaccess /var/www/html/.htaccess

    # move httpd.conf
    mv iheavy_httpd.conf /etc/httpd/conf.d

    # move our wp-config into place
    mv wp-config.php /var/www/html

    # restart apache
    service httpd restart

    # allow apache to create uploads & any files inside wp-content
    chown apache /var/www/html/wp-content

You can monitor things as they’re being installed. Use ssh to reach your new instance. Then as root:

    $ tail -f /var/log/cloud-init.log
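The mysql password file step in the script above appends to `/root/.my.cnf` one line at a time with `echo`. One possible variation, sketched here under assumptions, writes the file in one go with a here-document and locks down its permissions so only the owner can read the password. The function name `write_my_cnf` and its arguments are illustrative, and the values in the usage line are the same placeholders the script uses.

```shell
#!/bin/sh
# Hypothetical helper: write a MySQL client config readable only by its owner.
# Arguments: target file, host, user, password.
write_my_cnf() {
    target=$1
    (
        # umask 077 inside the subshell so a new file is created with
        # mode 600, without changing the umask for the rest of the script.
        umask 077
        cat > "$target" <<EOF
[client]
host=$2
user=$3
password=$4
EOF
    )
    # Tighten permissions in case the file already existed with a looser mode.
    chmod 600 "$target"
}

# Usage inside user-data (placeholder values from the script above):
#   write_my_cnf /root/.my.cnf my-rds.ccccjjjjuuuu.us-east-1.rds.amazonaws.com root abc123
```

Overwriting rather than appending also means re-running the script doesn’t leave duplicate entries in the file.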

5. Time to Test

Visit the domain name you specified inside your /etc/httpd/conf.d/mysite.conf file.

You have full automation now. Don’t believe me? Go ahead & TERMINATE the instance in your AWS console. Now drop back to your terminal and do:

    $ terraform apply

Terraform will figure out that the resources that *should* be there are missing, and go ahead and build them for you. AGAIN. Fully automated style!
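Keep in mind that once `terraform apply` returns, the user-data script still needs some time to finish, so the site won’t answer right away. A small polling helper like the sketch below can wait for it. The function name `wait_for`, the retry numbers and the example URL are assumptions for illustration; `a_simple_pingdom_test.php` is the test file from step 1.

```shell
#!/bin/sh
# Hypothetical helper: retry a command until it succeeds or attempts run out.
# Usage: wait_for ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
wait_for() {
    attempts=$1
    delay=$2
    shift 2
    n=1
    while [ "$n" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        # Sleep between attempts, but not after the last one.
        if [ "$n" -lt "$attempts" ]; then
            sleep "$delay"
        fi
        n=$((n + 1))
    done
    return 1
}

# Example (example.com stands in for your own domain):
#   wait_for 30 10 curl -fsS -o /dev/null http://example.com/a_simple_pingdom_test.php
```

With `curl -f`, any HTTP error status counts as a failure, so the loop keeps going until Apache is actually serving the page.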

Don’t Forget Your Analytics Beacon Code

Hopefully you remember how your analytics is configured. The beacon code makes an API call every time a page is loaded. This tells Google Analytics or other monitoring systems what your users are doing, where they’re going & how much time they’re spending.

This typically goes in the header.php file. We’ll leave it as an exercise to automate this piece yourself!

Conclusion

That’s how to automate the deployment of your WordPress site on AWS using Terraform and Composer. Part one covers decoupling your assets by moving your database to its own server or RDS and using Amazon’s S3 for all your file uploads. Part two covers the automation itself, using Composer to manage dependencies and Terraform to formalize your requests to Amazon’s API. With these tools, you can make your site faster and more reliable, and host it on cheaper components.