Did MySQL & Mongo Have a Beautiful Baby Called Aurora?

Amazon recently announced RDS Aurora, a new addition to its database-as-a-service offering.

Mark Callaghan has written up his take on what’s happening under the hood, and Fusheng Han has shared some thoughts as well.

With RDS, Amazon is uniquely positioned to take on offerings like Clustrix, so it’s definitely worth reading Dave Anselmi’s take on Aurora too.

Features of Aurora

Here is what you can get from Aurora:

1. Big Availability Gains

One of the big improvements Aurora seems to offer is in availability. You can replicate with Aurora’s own replication, or alternatively with standard MySQL binlog-based replication. Amazon also keeps two copies of the data in each of three different availability zones, for six copies in total.

All this is done over Amazon’s SSD-backed storage network, which should make it very fast indeed.
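To make the arithmetic concrete, here is a minimal Python sketch of that layout, assuming simply two copies per zone across three zones. The zone names and helper functions are hypothetical, and the sketch models only where copies live, not how Amazon actually writes or reads them. It shows that losing an entire availability zone still leaves four of the six copies.

```python
from collections import Counter

# Hypothetical model of the layout: two copies of the data in each of three
# availability zones. The AZ names are made up for illustration.
AZS = ["az-a", "az-b", "az-c"]
COPIES_PER_AZ = 2


def place_copies(azs: list[str], copies_per_az: int) -> list[tuple[str, int]]:
    """Return one (az, copy_index) tuple per stored copy."""
    return [(az, i) for az in azs for i in range(copies_per_az)]


def surviving_copies(copies: list[tuple[str, int]], failed_azs: set[str]) -> int:
    """Count the copies that remain when every node in `failed_azs` is lost."""
    return sum(1 for az, _ in copies if az not in failed_azs)


copies = place_copies(AZS, COPIES_PER_AZ)
print("total copies:", len(copies))
print("per AZ:", dict(Counter(az for az, _ in copies)))
print("after losing one AZ:", surviving_copies(copies, {"az-a"}))
print("after losing two AZs:", surviving_copies(copies, {"az-a", "az-b"}))
```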

2. SSD Means 5x Faster

The Amazon RDS Aurora FAQ claims it will be up to 5x faster than standard MySQL running on equivalent hardware, thanks to Amazon’s proprietary SSD storage network. If that holds up, it will be welcome news to anyone already running MySQL, whether on their own servers or on Amazon RDS for MySQL.

3. Failover Automation

Unplanned failover takes just a few minutes. Here customers will really benefit from the automation Amazon has built around the process. Existing MySQL customers can of course do all of this themselves, but it typically requires operations teams to anticipate and script the necessary steps.
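For comparison, here is a rough sketch of the kind of logic an operations team might script for a self-managed MySQL setup: detect that the primary is down, pick the healthy replica that has applied the most of the primary’s binary log, and promote it. Everything here (the `Node` class, the single-integer log position, the function names) is a hypothetical simplification, not Amazon’s implementation and not a real MySQL tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """Hypothetical view of a MySQL server as a failover script might track it."""
    name: str
    healthy: bool
    # How far into the primary's binary log this server has applied changes
    # (simplified to a single integer for illustration).
    applied_position: int
    is_primary: bool = False


def choose_new_primary(nodes: list[Node]) -> Optional[Node]:
    """Pick the healthy replica that has applied the most of the primary's log."""
    candidates = [n for n in nodes if n.healthy and not n.is_primary]
    if not candidates:
        return None
    return max(candidates, key=lambda n: n.applied_position)


def fail_over(nodes: list[Node]) -> Optional[Node]:
    """If the primary is unhealthy, promote the best replica and return it."""
    primary = next((n for n in nodes if n.is_primary), None)
    if primary is not None and primary.healthy:
        return primary  # primary is fine: nothing to do
    new_primary = choose_new_primary(nodes)
    if new_primary is None:
        return None  # no safe candidate: time to page a human
    if primary is not None:
        primary.is_primary = False
    new_primary.is_primary = True
    # A real script would also fence the old primary, repoint the remaining
    # replicas, and update DNS or the application's connection settings here.
    return new_primary


cluster = [
    Node("db1", healthy=False, applied_position=1000, is_primary=True),
    Node("db2", healthy=True, applied_position=990),
    Node("db3", healthy=True, applied_position=995),
]
promoted = fail_over(cluster)
print("promoted:", promoted.name if promoted else None)
```

Even this toy version has several decisions baked into it, and a production script has many more edge cases; that is the kind of work Amazon’s automation aims to take off your plate.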

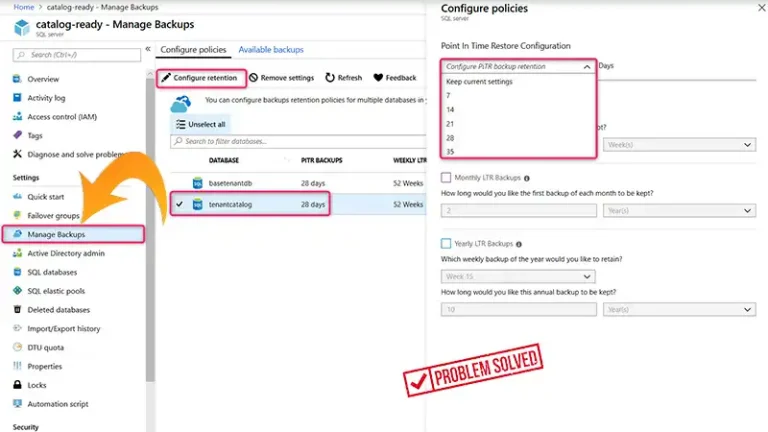

4. Incremental Backups & Recovery

Aurora supports incremental backups and point-in-time recovery. With MySQL this has traditionally been a fairly manual process: restore a full backup, then replay the binary logs up to the desired moment. In my experience, MySQL customers are either unaware of the feature or not interested in using it because of the complexity. They restore last night’s backup and avoid the hassle.

I predict automation around this will be a big win for customers.
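Point-in-time recovery on plain MySQL boils down to two steps: restore the last full backup, then replay logged changes up to the chosen moment. The Python sketch below models that with a dictionary standing in for the database and a simplified, hypothetical `LogEvent` record standing in for binary log entries; the sample data is made up. It illustrates the idea only and is not how Aurora implements recovery.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class LogEvent:
    """One change recorded in a (hypothetical, simplified) binary log."""
    timestamp: datetime
    key: str
    value: str


def restore_to_point_in_time(
    full_backup: dict[str, str],
    backup_time: datetime,
    log: list[LogEvent],
    target_time: datetime,
) -> dict[str, str]:
    """Rebuild the data as it stood at `target_time`.

    Step 1: start from the last full backup.
    Step 2: replay, in order, every logged change made after the backup
            and up to (and including) the target time.
    """
    if target_time < backup_time:
        raise ValueError("target time is earlier than the backup")
    data = dict(full_backup)  # copy so the backup itself is left untouched
    for event in sorted(log, key=lambda e: e.timestamp):
        if backup_time < event.timestamp <= target_time:
            data[event.key] = event.value
    return data


backup = {"balance:alice": "100"}
backup_taken = datetime(2014, 1, 1, 0, 0)
binlog = [
    LogEvent(datetime(2014, 1, 1, 9, 0), "balance:alice", "150"),
    LogEvent(datetime(2014, 1, 1, 9, 30), "balance:bob", "20"),
    # An accidental bad write we want to roll back past:
    LogEvent(datetime(2014, 1, 1, 10, 0), "balance:alice", "0"),
]
restored = restore_to_point_in_time(
    backup, backup_taken, binlog, datetime(2014, 1, 1, 9, 59)
)
print(restored)
```

Picking a target time just before the bad write keeps the good changes from the morning and discards the mistake, which simply restoring last night’s backup cannot do.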

5. Warm Restarts

RDS Aurora separates the buffer cache from the MySQL process. Amazon has probably accomplished this by reworking parts of the stock MySQL server code. This means the cache can survive a database restart, so your database starts with a warm cache and avoids a service brownout while the cache refills.

I expect this is a feature that looks great on paper but that customers will rarely benefit from.
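Amazon hasn’t said exactly how the buffer cache is separated from the MySQL process, so the toy Python model below only illustrates why it matters. `BufferCache` and `DatabaseProcess` are hypothetical classes: in the first scenario the cache is recreated on restart, in the second the same cache object outlives the process.

```python
class BufferCache:
    """Hypothetical page cache that can live outside the database process."""

    def __init__(self) -> None:
        self.pages: dict[int, bytes] = {}


class DatabaseProcess:
    """Toy database process that reads pages through a buffer cache."""

    def __init__(self, cache: BufferCache) -> None:
        self.cache = cache
        self.hits = 0
        self.misses = 0

    def read_page(self, page_id: int) -> bytes:
        if page_id in self.cache.pages:
            self.hits += 1
        else:
            self.misses += 1
            # Stand-in for a slow read from storage.
            self.cache.pages[page_id] = f"page-{page_id}".encode()
        return self.cache.pages[page_id]


def run_workload(db: DatabaseProcess, page_ids: range) -> tuple[int, int]:
    """Read each page once and return (hits, misses) for this process."""
    for page_id in page_ids:
        db.read_page(page_id)
    return db.hits, db.misses


workload = range(100)

# Classic behaviour: the cache is owned by the process and dies with it.
run_workload(DatabaseProcess(BufferCache()), workload)
cold_restart = DatabaseProcess(BufferCache())  # restart: brand-new, empty cache
print("cold restart (hits, misses):", run_workload(cold_restart, workload))

# Separated cache: the same cache outlives the process.
shared_cache = BufferCache()
run_workload(DatabaseProcess(shared_cache), workload)
warm_restart = DatabaseProcess(shared_cache)  # restart: existing cache reused
print("warm restart (hits, misses):", run_workload(warm_restart, workload))
```

On the cold restart every read misses and has to go back to storage; on the warm restart every read is a hit. A real workload would see a mix, but that gap in the first minutes after a restart is the brownout the feature is meant to avoid.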

Unanswered Questions

The FAQ Says Point-in-Time Recovery Works Up to the Last Five Minutes. What Happens to Data Written in Those Five Minutes?

Presumably Aurora’s six-way replication and read replicas provide this additional protection.

If Amazon Implemented Aurora as a New Storage Engine, Doesn’t That Mean New Code?

As with anything, your mileage may vary, but InnoDB has been in the wild for many years. It is widely deployed and has therefore been tested in a wide variety of environments. Aurora, by comparison, is still a very new experiment.

Conclusion

So that’s Aurora, the baby of MySQL and Mongo. We hope you’ve found this guide helpful. If you have further questions on this topic, feel free to ask in the comments section below. Thanks for reading!