5 Things You Overlooked With Mysql Dumps

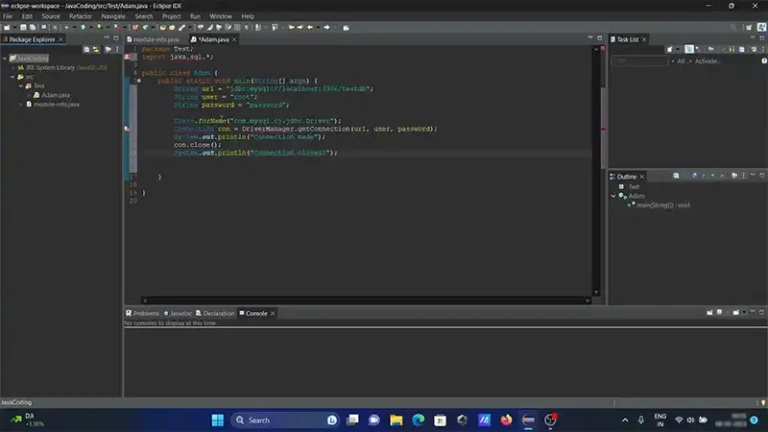

You may already know that when the mysqldump client utility performs logical backups, it produces a set of SQL statements that you can execute to reproduce the original database object definitions and table data.

Although it dumps one or more MySQL databases for backup or transfer to another SQL server, there are 5 things that you may overlook with MySQL dumps.

Here I’ve discussed 5 things you shouldn’t overlook with MySQL dumps. Let’s explore them below.

5 Things You Need to Concentrate About MySQL Dumps

Here are the five things you may overlook with MySQL dumps:

1. Point In Time Recovery

If you’ve never done point-in-time recovery, it’s time to take a second look. With a standard mysqldump you restore your database to the time when the backup happened. Only do them once a day, then you can lose as much as 24 hours of data.

Enter point-in-time recovery, and you have the option to restore all those transactions that occured since your backup last night. Those changes, INSERTs, UPDATEs, DELETEs & even ALTER TABLEs are all stored in the binary logs. That’s right, all those statements are preserved for you!

The tool to fetch those statements with is called mysqlbinlog. When you use it, you’ll need to specify the binlog file & offset to start at. Uh oh, you don’t have those?

That’s where the mysqldump option –master-data comes in. We recommend using –master-data=2 option. That includes these important nuggets of information in the dump file as a comment.

Now you’ll know where to start applying transactions. Simply dig this information out of the dump file.

What I like to do is dump the statements into a text file first, then apply them.

Here’s how you fetch all the transactions since your dump:

$ mysqlbinlog --offset=12345 /var/lib/mysql/binlog.000005 > my_point_in_time_data.mysql

Here’s how you would then apply them:

$ mysql < my_point_in_time_data.mysq2. Locking & Consistency

MySQL can be confusing when it comes to concurrency and locking. Do we need to lock tables during a backup, yes, no? Sometimes? The confusion is because MyISAM tables are non-transactional. So sometimes locking all tables is required to get a consistent dump.

Lock-All-Tables

This option is only required if your application works on tables across schemas. So, if any one application hits multiple schemas and there are MyISAM tables in both, you’ll need to use this option. It’s equivalent to FLUSH TABLES WITH REACH LOCK.

Lock Tables

This option locks all tables in one schema, performs backup, then moves on to the next schema. You only need it if you have MyISAM tables. It’s on by default and with –opt flag.

Single-Transaction

This is the least invasive way to do a dump of your data. However it only works if all your tables are InnoDB. Keep in mind it won’t error out if you have MyISAM tables, but your backup will be inconsistent. The plus side is that from your application’s perspective there is really no blocking locks happening. Very cool indeed!

3. Backups of Stored Code

If you have stored procedures, triggers or functions, you’re not capturing them in your dumps by default. Really! Use the –routines option to include them. Better yet do a dump with just your stored code.

This line will do so, and add a date timestamp for you. Be sure to have the [client] or [mysql] section in your .my.cnf file with user & password specified. Then you don’t need them on the command line.

$ mysqldump --no-data --no-create-info --all-databases --routines > db_code_`/bin/date +%m%d%Y`.mysqlAre you verifying replication slaves? You should know they regularly drift out of sync without throwing errors.

4. Separate Schema & Data Dumps

Sometimes it’s useful to have a schema-only backup. That is a dump of the objects in your database (CREATE statements) without any of the data. Why you ask? For starters, you could use the Unix “diff” command between a schema dump today, and one you did last month.

That’s a cool use case, but there are others. Suppose you want to build a test server, without all the production data or some subset of it? Again, a schema-only dump is your friend.

- Use unix diff to compare objects in two different databases

- Audit schema changes over time

- Build test servers without all the production data

- Parallel loading of data

- use multiple mysql processes to import data

- break up the data only portion of your backup

Here’s the syntax to get the schema-only dump:

$ mysqldump --no-data --all-databases > db_schema_`/bin/date +%m%d%Y`.mysqlHere’s how you get the data-only dump:

$ mysqldump --no-create-info --all-databases --complete-insert --skip-quote-names > db_data__`/bin/date +%m%d%Y`.mysql5. A Few More Options

Complete-Insert

By default, mysqldump includes INSERT statements built to *follow* create statements. So, they assume all the columns match. If you break up your schema & data you could potentially have a mismatch.

This option makes sure the INSERT statements included in the dump specify column names. So if they don’t match, you’ll get a proper error message.

Skip-Quote-Names

MySQL’s default dump settings will quote everything under the sun. That’s to protect if you use certain reserved words or worse if you have objects with spaces in the names.

But of course, best practices advise against *both* of those in your database, so be advised. This option will make your dump much more readable and easier to edit.

Hex-Blob

We had a customer who was using the cross-platform media publishing system K4. When we moved data from one server to another, we had a terrible time at first. That’s because this enterprise application stores data in MySQL blobs.

Unfortunately, the default mysqldump options didn’t account for certain special characters that were getting munged. The way around that is to use this option. If you have blobs, you really need this.

Conclusion

All the five things that most data engineers overlook while working with MySQL dumps are explained in this article and we hope you should focus on these 5 things and never overlook them after reading this article. If you still have anything to ask regarding this topic, feel free to share your question with us in the comment section below and we’ll reach you as soon as we can. Thanks for reading!