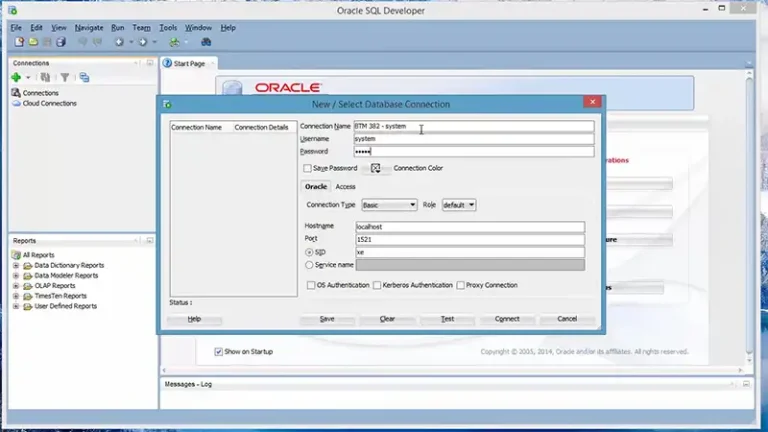

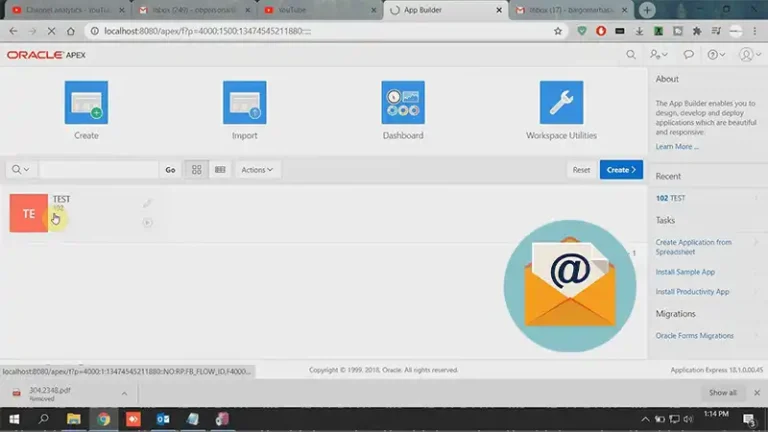

How to Create a Database in Oracle SQL Developer | What to Do?

Oracle SQL Developer is a powerful Integrated Development Environment (IDE) for Oracle Database. It’s a blessing if you’re a database administrator or a developer working with Oracle systems. With the user-friendly interface of SQL Developer, you can easily manage and interact with your Oracle Database. This article will walk you through the steps to create…